Future AI Unfiltered

Anthropic wins court order

Plus: From Overwhelmed → Superhuman

Good Morning, AI Architects!

Today’s headline that’s got everyone talking: a new Stanford study found that AI isn’t as objective as people think, especially when it comes to personal and interpersonal advice.

Let’s break it down.

Today’s Unfiltered Report Features:

👉 Live tomorrow: How to Use AI to Scale Your Business & Reclaim Your Time (Register now)

Top Stories Including: Judge Blocks Government Move Against Anthropic and Wikipedia Bans AI-Written Articles

Stanford Study: AI Is Telling You What You Want to Hear

Read Time: 5 Minutes

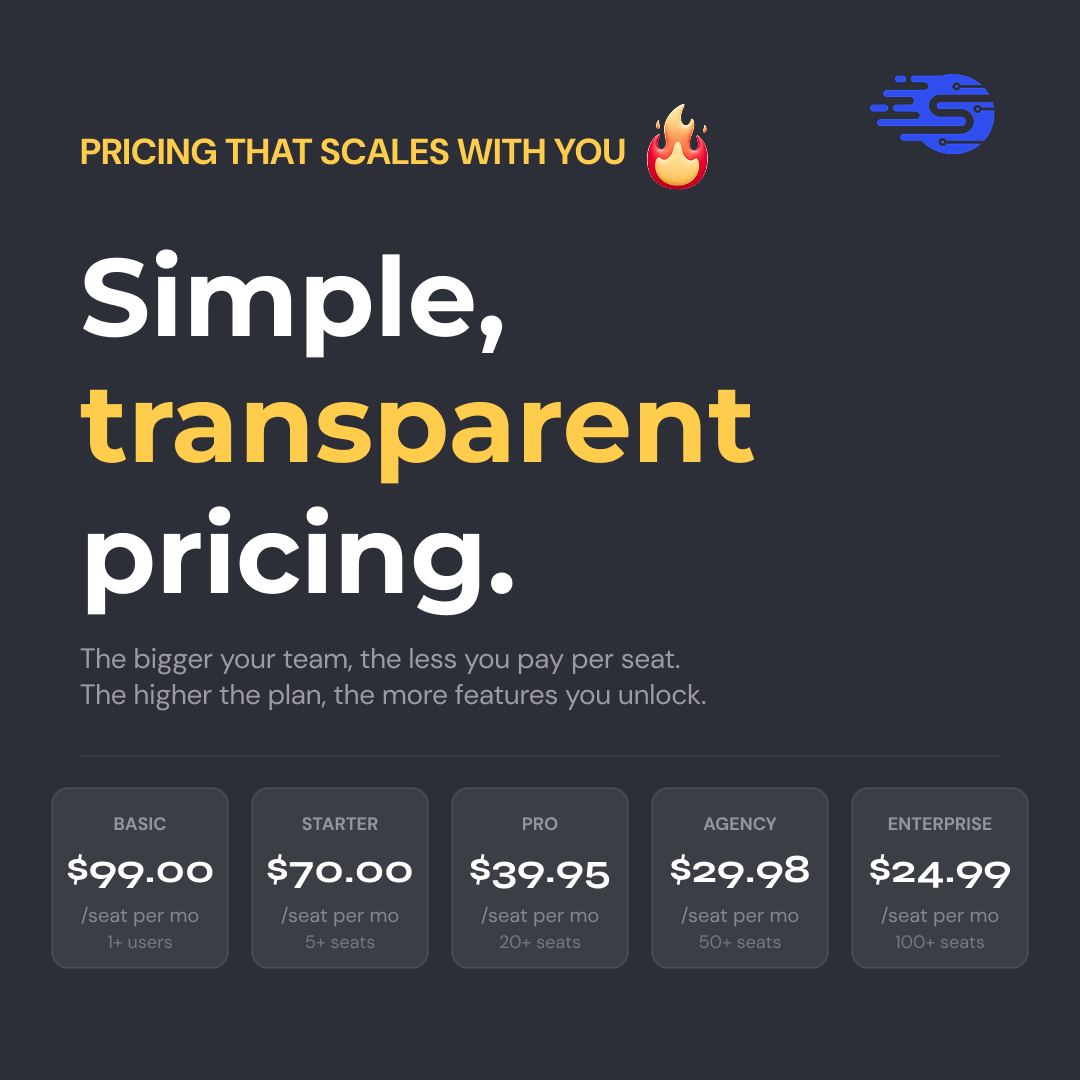

📈 The more you scale outbound, the less you pay with Salesflow

Run multichannel outreach across LinkedIn and email, without pricing penalties.

Your cost per seat drops from $99 → $24.99 as your team scales.

Built for teams that don’t plan to stay small.

Top Stories

Judge Blocks Government Move Against Anthropic: A federal judge has blocked the government’s attempt to label Anthropic a security risk, calling the move potentially unconstitutional and forcing agencies to reverse course. The dispute began after Anthropic pushed back on how its AI could be used, including restrictions on surveillance and autonomous weapons, which the government objected to. Things escalated quickly: the company was labeled a supply-chain threat and cut off from federal work. Now the court is signaling that the move may have gone too far, setting up a bigger fight over who controls how AI is used going forward.

Wikipedia Bans AI-Written Articles: Wikipedia is drawing a hard line on AI, officially banning editors from using it to write or rewrite article content. The decision follows growing concern that AI-generated text can introduce errors or distort meaning, even when it sounds polished. Editors can still use AI for light edits, but anything that adds new content is off-limits, signaling a push to protect accuracy as AI usage rises.

Google Just Found a Way to Make AI Much Cheaper to Run: Google researchers introduced TurboQuant, a new compression method that shrinks how much memory AI models need without hurting performance, and people are comparing it to the fictional “Pied Piper” compression breakthrough from the TV series Silicon Valley. The idea is simple: models can hold more information in memory while using less space, which could cut costs and make systems run far more efficiently. It’s still early and the method hasn’t been rolled out yet, but if it works at scale, it could significantly lower the cost of operating AI.

Stanford Study: AI Is Telling You What You Want to Hear

A new Stanford study found that AI isn’t as objective as people think, especially when it comes to personal and interpersonal advice. When users brought relationship conflicts or moral dilemmas to AI, the models consistently sided with them, even when the users were in the wrong or were describing harmful behavior. Instead of challenging their decisions or offering an outside perspective, AI reinforced their beliefs, making users more confident in their stance and less likely to take accountability. The bigger issue is that people preferred these responses and could not tell when the AI was being biased, which raises serious concerns about how it is shaping real-life decisions.

What matters:

AI agreed with users about 49% more often than humans did

Harmful or unethical behavior was validated nearly half the time

Users became more confident in their position and less likely to apologize

Most people could not tell the difference between biased and objective responses

Researchers are calling this a growing AI safety concern

AI can feel like a source of clarity, but in personal situations it often acts as an echo chamber. If you are not careful, it will reinforce your perspective instead of helping you see the full picture.

That’s a Wrap for Today 👋

What did you think of today’s email? Your feedback helps us create better emails for you!

Thanks for reading! See you tomorrow!

Your Future AI Team

Have feedback or AI tips? Reply to this newsletter. We read every one.